To be transparent with the University of Tennessee, Knoxville community, our team has created QEP Impact Reports presenting data on the Experience Learning QEP Initiative. We hope that releasing these data will give the UT community a better understanding of how Experience Learning opportunities are making a difference for our students, faculty, and staff.

Direct assessment is critical for evaluating Experience Learning’s impact on student learning at the University of Tennessee, Knoxville. As its direct assessment of student learning, the UT QEP uses a series of rubrics built around each student learning outcome and its associated benchmarks. The rubrics are adapted from the Association of American Colleges and Universities’ Valid Assessment of Learning in Undergraduate Education (VALUE) rubrics.

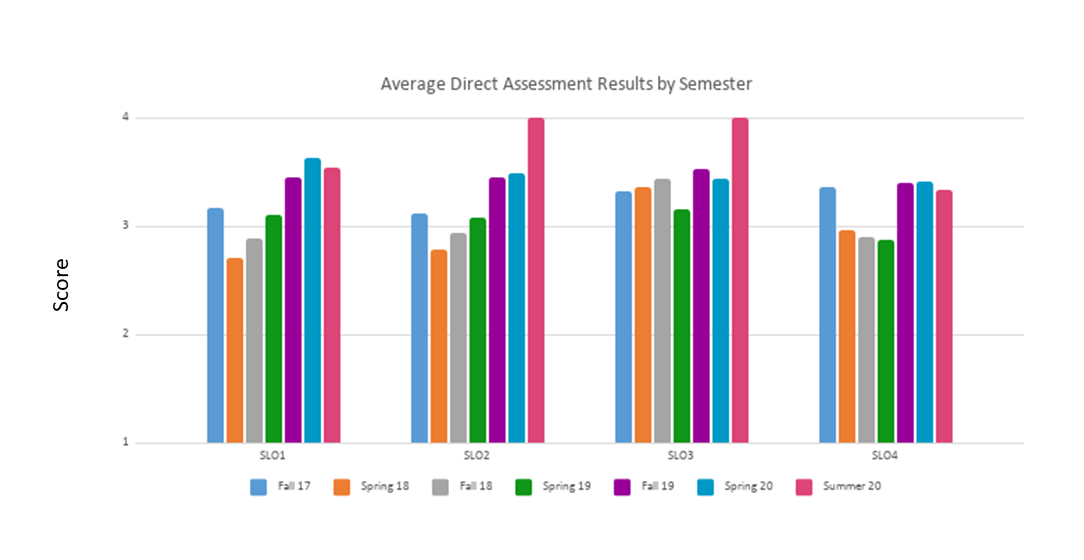

Each rubric is designed to evaluate students’ level of competence in key domains such as critical and creative thinking, global learning, oral communication, teamwork, and quantitative reasoning. The VALUE rubrics have demonstrated good reliability and validity and are widely used by institutions throughout the United States. We adapted them to evaluate our four student learning outcomes (SLOs). The direct assessment is distributed electronically to faculty, who evaluate each participating student after the course or program is completed. Results from the direct assessment rubric are displayed in the figure below.

The figure shows the average student score for each SLO across seven semesters (fall 2017 – summer 2020). The rubrics were scored by instructors who taught experiential learning courses, and each instructor scored only the students in their own class.

Indirect assessments complement direct assessments by measuring changes in attitudes, beliefs, and behaviors that result from experiential learning. The QEP’s indirect assessment is critical for capturing changes in student learning that are best reflected in students’ attitudes and dispositions. Whereas the rubrics described above assess student learning in QEP-related classes, a series of indirect assessment tools evaluate Experience Learning’s influence on the campus community and on student learning.

The indirect assessment is a pair of student surveys that measure students’ perceptions of their own learning and of their attainment of the SLOs and benchmarks at the beginning and end of the semester. This method builds on the direct assessment rubrics by giving students another opportunity to engage in structured reflection as part of their learning process. Both surveys are distributed electronically to faculty, who pass them on to students: the Pre-Experience Student Survey is completed during the first weeks of the semester, and the Post-Experience Student Survey during the final weeks. The two surveys share corresponding items so that student growth and perceived attainment can be measured over the course of an experiential learning course.

The surveys measure students’ perceived attainment of the four SLOs:

- SLO 1: Students will value the importance of engaged scholarship and lifelong learning.
- SLO 2: Students will apply knowledge, values, and skills in solving real-world problems.
- SLO 3: Students will work collaboratively with others.
- SLO 4: Students will engage in structured reflection as part of the inquiry process.

For each item, students rate their level of agreement on a 7-point scale (i.e., strongly disagree, disagree, somewhat disagree, neither agree nor disagree, somewhat agree, agree, strongly agree).
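To average Likert responses into SLO scores, the agreement labels must first be converted to numbers. The sketch below shows one common way to do this. It assumes each label is coded 1 (strongly disagree) through 7 (strongly agree) and that a student’s SLO score is the mean of their coded answers to that SLO’s items. The report does not state its coding scheme, and the names `LIKERT_7` and `slo_score` are hypothetical.

```python
from statistics import fmean

# Assumed numeric coding of the 7-point agreement scale (1 = strongly disagree,
# 7 = strongly agree); the report does not specify how labels were coded.
LIKERT_7 = {
    "strongly disagree": 1,
    "disagree": 2,
    "somewhat disagree": 3,
    "neither agree nor disagree": 4,
    "somewhat agree": 5,
    "agree": 6,
    "strongly agree": 7,
}


def slo_score(responses: list[str]) -> float:
    """Hypothetical helper: mean of one student's coded answers to a single SLO's items."""
    # Normalize case and surrounding whitespace before looking up each label.
    return fmean(LIKERT_7[r.strip().lower()] for r in responses)


# Example: one student's answers to three hypothetical SLO 3 (teamwork) items.
print(slo_score(["agree", "somewhat agree", "strongly agree"]))  # 6.0
```

Averaging coded Likert items treats the scale as roughly interval. This is a common convention when reporting mean scores like those in the figures, but it remains an assumption here.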

During the first four semesters (fall 2017 – spring 2019), the surveys were given to students enrolled in courses redesigned through our Experience Learning Faculty Fellows Program. After the Faculty Fellows Program ended, the surveys were distributed to students in Experiential Learning Designated Courses (S-, R-, and N-designated courses) during the last three semesters (fall 2019 – summer 2020). Note that none of the courses redesigned through the Faculty Fellows Program were offered during the summer. Results for the Pre-Experience and Post-Experience Student Surveys during the QEP are shown in the figure below.

The figure shows the average Pre- and Post-Experience Student Survey scores for each semester and SLO. In addition, a dependent samples t-test was conducted between the pre- and post-surveys for each semester and SLO to determine whether statistically significant changes occurred between the beginning and the end of the semester.
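A dependent (paired) samples t-test compares each student’s post-survey score with that same student’s pre-survey score. The test statistic is the mean of the per-student differences divided by the standard error of those differences, t = d̄ / (s_d / √n), with n − 1 degrees of freedom. The minimal sketch below computes that statistic using only the Python standard library. It assumes pre- and post-survey responses have been matched by student. The function name `paired_t` and the sample scores are hypothetical, not QEP data.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(pre: list[float], post: list[float]) -> tuple[float, int]:
    """Dependent samples t statistic for matched pre/post scores.

    Returns (t, df). Assumes pre[i] and post[i] belong to the same student.
    """
    if len(pre) != len(post):
        raise ValueError("pre and post must be matched pairs of equal length")
    n = len(pre)
    if n < 2:
        raise ValueError("need at least two matched pairs")
    diffs = [b - a for a, b in zip(pre, post)]  # post minus pre, per student
    s_d = stdev(diffs)  # sample standard deviation of differences (n - 1 denominator)
    if s_d == 0:
        raise ValueError("differences have zero variance; t is undefined")
    t = mean(diffs) / (s_d / sqrt(n))  # mean difference over its standard error
    return t, n - 1


# Hypothetical SLO scores for four students, matched by position.
pre_scores = [4.0, 5.0, 4.5, 5.5]
post_scores = [5.0, 5.5, 5.0, 6.5]
t, df = paired_t(pre_scores, post_scores)
print(round(t, 3), df)  # 5.196 3
```

The sketch stops at the t statistic. Significance is judged by comparing t against a t-distribution with `df` degrees of freedom. If SciPy is available, `scipy.stats.ttest_rel(post_scores, pre_scores)` returns the same statistic together with its p-value.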